10.4 Overfitting to Predictors and External Validation

Section 3.5 introduced a simple example of the problem of overfitting the available data during selection of model tuning parameters. The example illustrated the risk of finding tuning parameter values that over-learn the relationship between the predictors and the outcome in the training set. When models over-interpret patterns in the training set, the predictive performance suffers with new data. The solution to this problem is to evaluate the tuning parameters on a data set that is not used to estimate the model parameters (via validation or assessment sets).

An analogous problem can occur when performing feature selection. For many data sets it is possible to find a subset of predictors that has good predictive performance on the training set but has poor performance when used on a test set or other new data set. The solution to this problem is similar to the solution to the problem of overfitting: feature selection needs to be part of the resampling process.

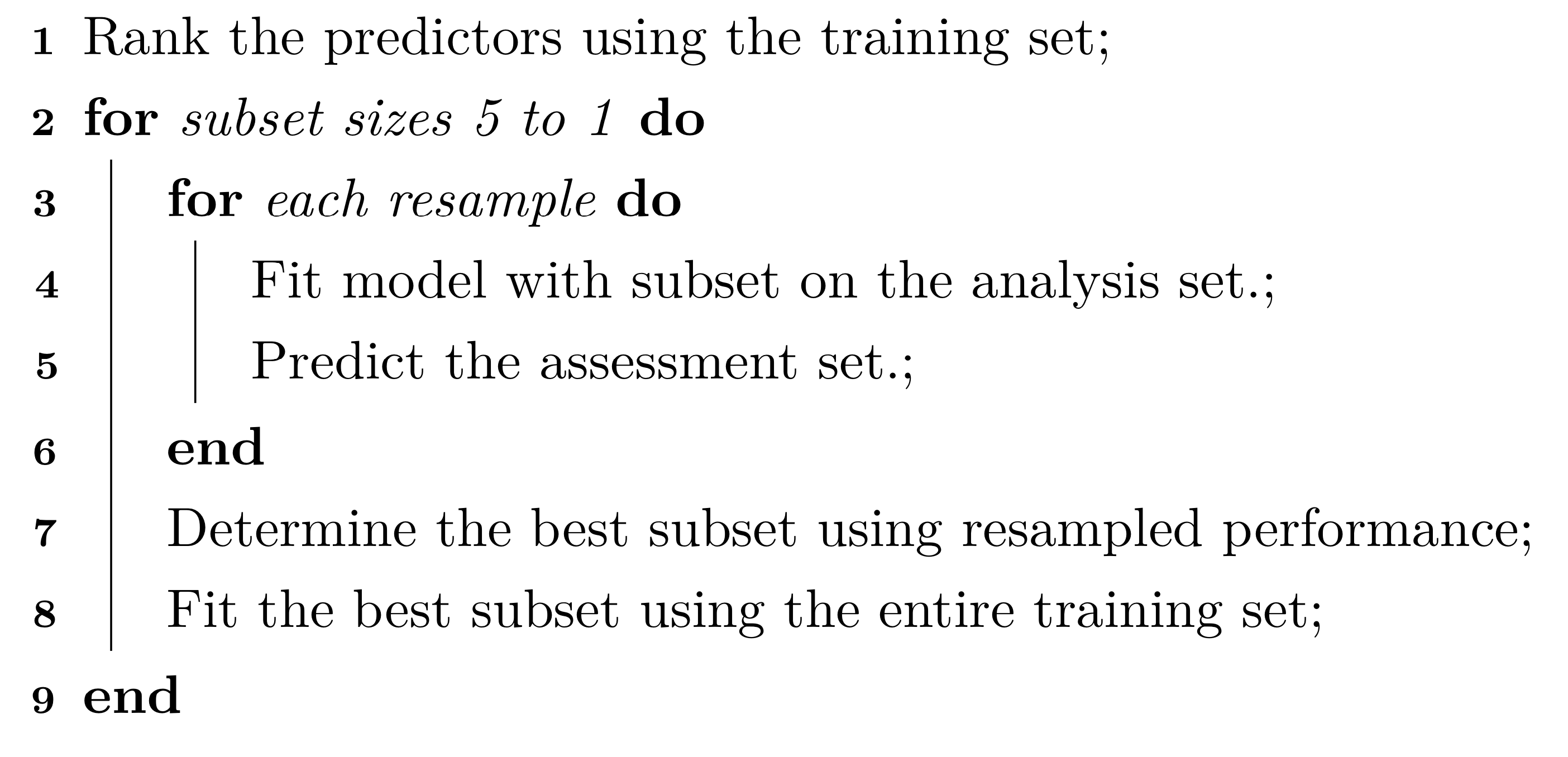

Unfortunately, despite the need, practitioners often combine feature selection and resampling inappropriately. The most common mistake is to only conduct resampling inside of the feature selection procedure. For example, suppose that there are five predictors in a model (labeled as A though E) and that each has an associated measure of importance derived from the training set (with A being the most important and E being the least). Applying backwards selection, the first model would contain all five predictors, the second would remove the least important (E in this example), and so on. A general outline of one approach is:

There are two key problems with this procedure:

Since the feature selection is external to the resampling, resampling cannot effectively measure the impact (good or bad) of the selection process. Here, resampling is not being exposed to the variation in the selection process and therefore cannot measure its impact.

The same data are being used to measure performance and to guide the direction of the selection routine. This is analogous to fitting a model to the training set and then re-predicting the same set to measure performance. There is an obvious bias that can occur if the model is able to closely fit the training data. Some sort of out-of-sample data are required to accurately determine how well the model is doing. If the selection process results in overfitting, there are no data remaining that could possibly inform us of the problem.

As a real example of this issue, Ambroise and McLachlan (2002) reanalyzed the results of Guyon et al. (2002) where high-dimensional classification data from a RNA expression microarray were modeled. In these analyses, the number of samples were low (i.e., less than 100) while the number of predictors was high (2K to 7K). Linear support vector machines were used to fit the data and backwards elimination (a.k.a. RFE) was used for feature selection. The original analysis used leave-one-out (LOO) cross validation with the scheme outlined above. In these analyses, LOO resampling reported error rates very close to zero. However, when a set of samples were held out and only used to measure performance, the error rates were measured to be 15%–20% higher. In one case, where the \(p:n\) ratio was at its worst, Ambroise and McLachlan (2002) shows that a zero LOO error rate could sill be achieved even when the class labels were scrambled.

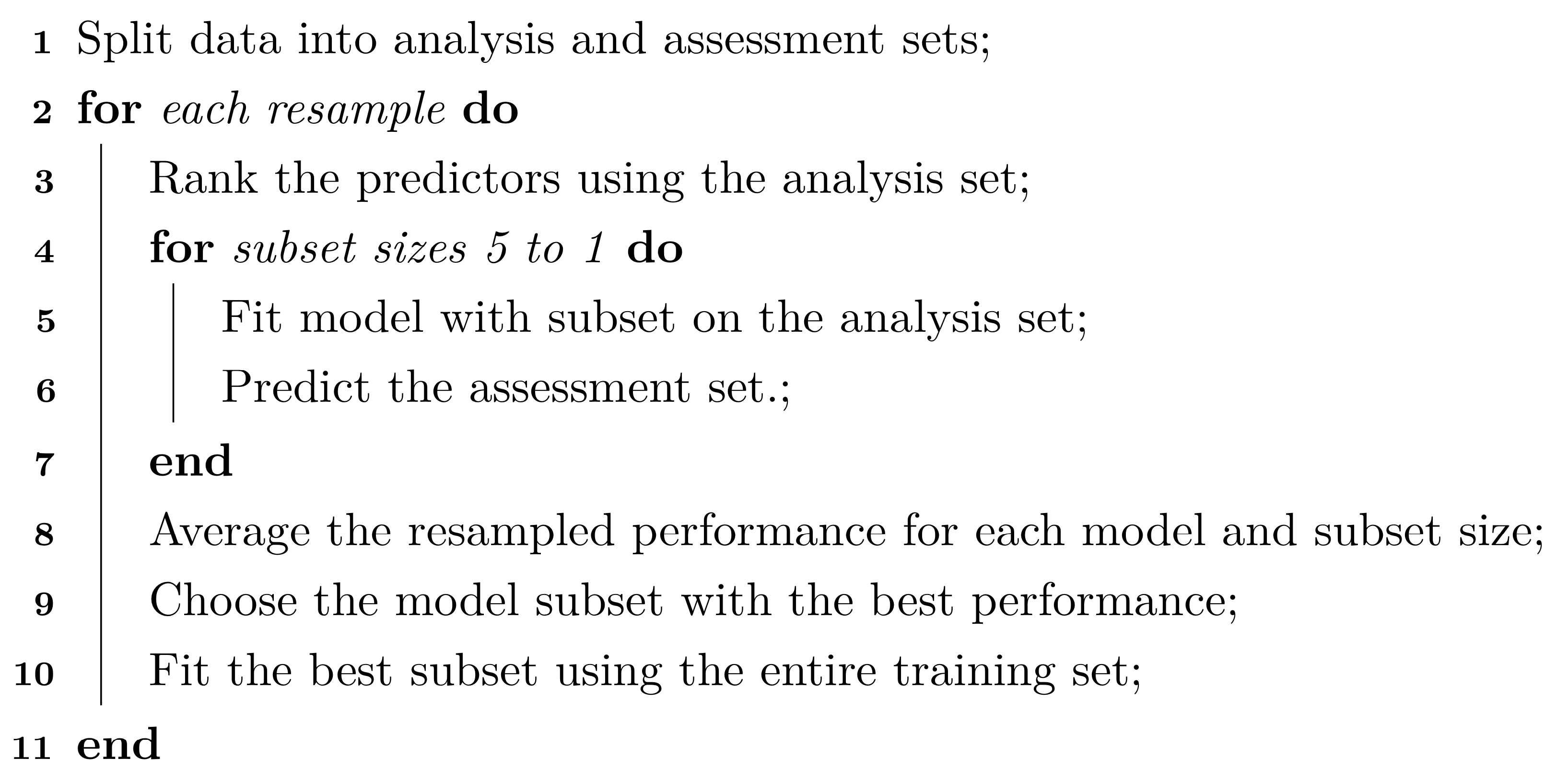

A better way of combining feature selection and resampling is to make feature selection a component of the modeling process. Feature selection should be incorporated the same way as preprocessing and other engineering tasks. What we mean is that that an appropriate way to perform feature selection is to do this inside of the resampling process81. Using the previous example with five predictors the algorithm for feature selection within resampling is modified to:

In this procedure, the same data set are not being used to determine both the optimal subset size and the predictors within the subset. Instead different versions of the data are used for each purpose. The external resampling loop is used to decide how far down the removal path that the selection process should go. This is then used to decide the subset size that should be used in the final model on the entire training set. Basically, the subset size is treated as a tuning parameter. In addition, separate data are then used to determine the direction that the selection path should take. The direction is determined by the variable rankings computed on analysis sets (inside of resampling) or the entire training set (for the final model).

Performing feature selection within the resampling process has two notable implications. The first implication is that the process provides a more realistic estimate of predictive performance. It may be hard to conceptualize the idea that different set of features may be selected during resampling. Recall, resampling forces the modeling process to use different data sets so that the variation in performance can be accurately measured. The results reflect different realizations of the entire modeling process. If the feature selection process is unstable, it is helpful to understand how noisy the results could be. In the end, resampling is used to measure the overall performance of the modeling process and tries to estimate what performance will be when the final model is fit to the training set with the best predictors. The second implication is an increase in computational burden82. For models that are sensitive to the choice of tuning parameters, there may be a need to retune the model when each subset is evaluated. In this case, a separate, nested resampling process is required to tune the model. In many cases, the computational costs may make the search tools practically infeasible.

In situations where the selection process is unstable, using multiple resamples is an expensive but worthwhile approach. This could happen if the data set were small, and/or if the number of features were large, or if an extreme class imbalances exist. These factors might yield different feature sets when the data are slightly changed. On the other extreme, large data sets tend to greatly reduce the risk of overfitting to the predictors during feature selection. In this case, using separate data splits for feature ranking/filtering, modeling, and evaluation can be both efficient and effective.